An overview of some of the key requirements under the EU AI Act, and what you need to do to get compliant.


On 22 January 2024, the final text of the European Union AI Act (the “AIA”) was leaked to the public. It introduces a lot of new obligations across the entire AI ecosystem and, with big penalties for non-compliance, it’s time for organisations to get to work.

This blog will guide you through some of the key things you need to consider to ensure your organisation is compliant.

The obligations

Article 16 sets out the key obligations on the providers of high-risk AI systems. The crux of these compliance obligations lies in establishing a quality management system and a risk management system, preparing and maintaining detailed technical documentation, and conducting conformity assessments. We’re going to break down these requirements in turn to provide some practical advice that organisations can use to get up to speed with what they need to do.

Although the legal requirement to comply with the obligations set out in this blog attaches primarily to AI systems that fit the EU’s definition of high-risk, this does not mean that AI systems falling outside that definition won’t also pose a substantial risk to your business (or that they won’t later be caught by the definition as they evolve). The AIA encourages organisations to voluntarily apply specific requirements of the legislative framework to such systems, and we think it makes sense to do so to ensure that all forms of risk are managed successfully.

What is a quality management system under the EU AI Act?

One of the first things you can do on your journey towards compliance is to set up your AI quality management system (“QMS”). The purpose of the QMS is to ensure that your organisation is well prepared to manage the risks that AI can pose. It’s overarching and should take the form of policies, procedures and instructions that establish the rules on how your organisation interacts with AI.

The QMS must include, amongst other things, the following:

a strategy for regulatory compliance (and crucially it must also include plans and procedures to deal with modifications to the AI system and/or the regulations);

requirements around risk management systems (see below);

detailed technical documentation (again, see below);

techniques for design, design control and design verification;

quality control and quality assurance procedures;

systems and procedures around data management; and

the establishment, implementation, and maintenance of a post-market monitoring system to ensure the AI system remains compliant with the AIA throughout its lifetime.
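One way to keep track of the elements above is a simple coverage check across your policy documents. The following is a minimal sketch only: the element names, the `QmsPolicy` class and the `missing_elements` helper are hypothetical labels chosen for illustration and are not wording or structures taken from the AIA.

```python
from dataclasses import dataclass, field

# Hypothetical short labels for the QMS elements listed above.
# These are illustrative names, not terms defined in the AIA.
QMS_ELEMENTS = (
    "regulatory_compliance_strategy",
    "risk_management_system",
    "technical_documentation",
    "design_control_and_verification",
    "quality_control_and_assurance",
    "data_management",
    "post_market_monitoring",
)


@dataclass
class QmsPolicy:
    """A policy, procedure or instruction and the QMS elements it addresses."""
    name: str
    covers: set[str] = field(default_factory=set)


def missing_elements(policies: list[QmsPolicy]) -> list[str]:
    """Return the QMS elements that no policy currently covers."""
    covered: set[str] = set()
    for policy in policies:
        covered |= policy.covers
    return [element for element in QMS_ELEMENTS if element not in covered]


policies = [
    QmsPolicy("AI policy", {"regulatory_compliance_strategy", "data_management"}),
    QmsPolicy("Model QA procedure", {"quality_control_and_assurance"}),
]
gaps = missing_elements(policies)
```

Here `gaps` lists the elements that still need a policy or procedure. A check like this only shows that a document exists for each element, not that the document is adequate.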

As a first step, you should look to establish an AI policy to cover off the points listed above. We have prepared a free and comprehensive guide on how you can draft these AI policies which is accessible here.

What is a risk management system under the EU AI Act?

The QMS obligations require that organisations adopt a risk management system (“RMS”) in respect of their AI.

There is a key but subtle difference here between the QMS and the RMS – a QMS covers how you manage risk and comply with regulations across your organisation, whereas an RMS covers how you manage risk and comply with regulations in respect of an individual AI system.

The RMS is a continuous, iterative process that runs throughout the entire lifecycle of a high-risk AI system and requires systematic review and updating. It shall involve the following steps:

identification and analysis of the reasonably foreseeable risks that the AI system can pose to health, safety or fundamental rights when it is used in accordance with its intended purpose;

an estimation and evaluation of the risks that may emerge when the AI system is used under conditions of reasonably foreseeable misuse;

evaluation of risks based on the analysis of data gathered from the post-market monitoring system; and

adoption of appropriate and targeted risk management measures designed to address all of the risks identified in accordance with the above requirements.

Ultimately, the risk management measures adopted through the RMS must be such that any relevant residual risk associated with each hazard, as well as the overall residual risk of the high-risk AI system, is judged to be acceptable. Where risks cannot be eliminated, adequate mitigation and control measures should be adopted. There are also additional requirements around testing, and additional considerations around the impact of the system on persons under the age of 18.
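The RMS steps described in this section could be tracked in a per-system risk register. The following is a minimal sketch under stated assumptions: the 1 to 5 severity and likelihood scales, the `mitigation_factor` field and the `ACCEPTABLE_RESIDUAL` threshold are all hypothetical, since the AIA does not prescribe a scoring method, and the sketch checks each risk individually rather than the overall residual risk of the system.

```python
from dataclasses import dataclass
from enum import Enum


class Source(Enum):
    """Where a risk was identified, mirroring the RMS steps in this section."""
    INTENDED_USE = "intended use"
    FORESEEABLE_MISUSE = "reasonably foreseeable misuse"
    POST_MARKET_MONITORING = "post-market monitoring"


@dataclass
class Risk:
    description: str
    source: Source
    severity: int    # hypothetical scale: 1 (low) to 5 (high)
    likelihood: int  # hypothetical scale: 1 (rare) to 5 (frequent)
    mitigation_factor: float = 1.0  # share of risk remaining after measures

    def residual_score(self) -> float:
        """Score remaining after risk management measures are applied."""
        return self.severity * self.likelihood * self.mitigation_factor


# Hypothetical threshold; each organisation would set and justify its own.
ACCEPTABLE_RESIDUAL = 6.0


def unacceptable_risks(register: list[Risk]) -> list[Risk]:
    """Return risks whose residual score exceeds the acceptance threshold."""
    return [r for r in register if r.residual_score() > ACCEPTABLE_RESIDUAL]


register = [
    Risk("Biased output for some groups", Source.INTENDED_USE, 4, 3, 0.5),
    Risk("Use outside documented scope", Source.FORESEEABLE_MISUSE, 5, 2),
]
flagged = unacceptable_risks(register)
```

Because the RMS is continuous and iterative, a register like this would be re-evaluated whenever post-market monitoring produces new data or the system is modified.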

What are the new documentation obligations?

The QMS requirements also specify that organisations need to draw up technical documentation in respect of a high-risk AI system before that system is placed on the market. Annex IV of the Act sets out the specific things that the documentation should cover. Ultimately, the documentation must be sufficient to provide competent authorities and notified bodies with the necessary information to form a clear and comprehensive view of the compliance of the AI system with the requirements of the AIA. This includes all documentation around the QMS and the RMS, and the technical documentation in respect of your system.

Did you know some of these documentation requirements can be automated using our AI governance solution? Read more about how our controls feature can automate this for you here, and get in touch to find out more.

The role of standards

Standards are going to play a central role in AIA compliance and at Enzai, we are already starting to see the market coalesce around certain frameworks. The National Institute of Standards and Technology (NIST) released its AI Risk Management Framework and we have worked with a number of organisations to develop frameworks that align with it. In late 2023, the International Organization for Standardization (ISO) and the International Electrotechnical Commission (IEC) published their ISO/IEC 42001 standard and we’re also working with organisations to implement this. Note that because these standards have not yet been adopted by the EU, on their own they won’t guarantee compliance. The AIA specifies that where the relevant harmonised standards are not applied in full, or do not cover all of the relevant requirements set out in the AIA, an organisation’s QMS must set out the means it will use to ensure compliance with those requirements.

There will be a mechanism by which certain standards can be approved by the EU and there will be a presumption of conformity with the AIA if an organisation can demonstrate it meets these standards. CEN and CENELEC (the European Committee for Standardization and the European Committee for Electrotechnical Standardization) established Joint Technical Committee 21 “Artificial Intelligence” to prepare a set of harmonised standards. While, as of the time of writing in January 2024, there is no specified release date for these standards (nor for their approval by the EU), we expect to see a publication date in the second half of the year given the important role these standards will play in AIA compliance.

What is a conformity assessment?

A conformity assessment does exactly what it says on the tin: it is an assessment to determine whether or not an AI system is in conformity with the AIA. The procedure for assessing conformity depends on a couple of variables. For example, as mentioned above, there is a presumption of conformity if an organisation can demonstrate that its AI system complies with a set of harmonised standards. For certain types of systems, the conformity assessment can be based on internal control, and for others it will involve a review by an external notified body.

A certification process will also be established and approved systems may affix the CE mark to their products. Further, most types of high-risk AI systems will have to be registered in a central EU database before they are placed on the market or put into service.

Some of these operational mechanisms will take the EU a bit of time to establish. They are coming though and by establishing your QMS, you can ensure you’re ready to go.

… Fear not, we can help

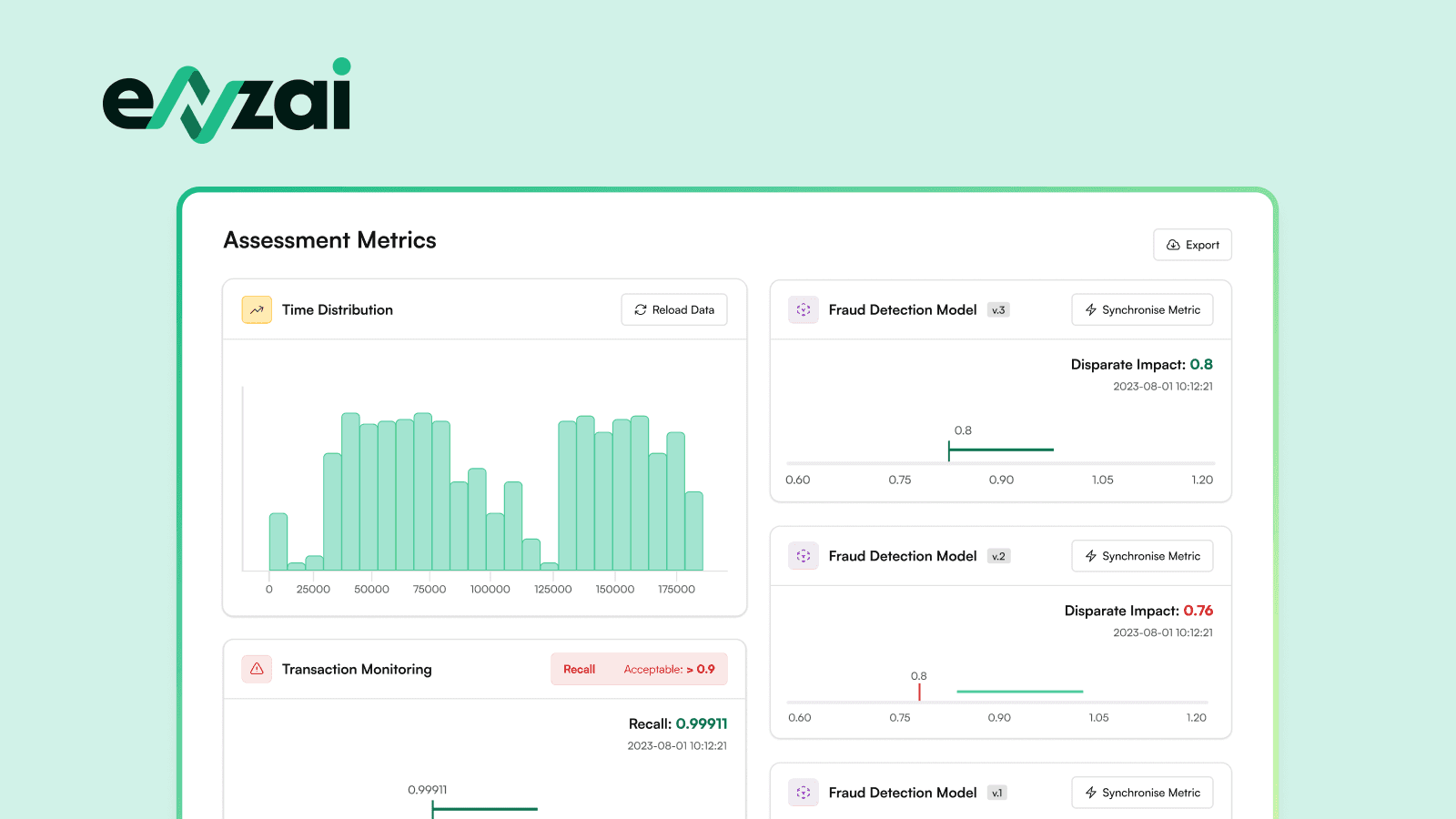

All of these new requirements may seem slightly overwhelming, but we are here to help. Our AI governance and risk management solution has been designed from the ground up to comply with all of these obligations out of the box. Our software platform serves as the quality management system required under the AIA, and our feature set ensures that you can roll out a risk management system in respect of all of your AI systems quickly and efficiently, with nothing falling through the gaps. We’ve designed the system to ensure that even when you’re working with standards that might not get you fully compliant, your QMS on Enzai will be easy to adopt and have everything covered off.

To find out more on how we can help, please do get in touch here.
